THE THIRD ROOM

C++

CINDER

KINECT

PROCESSING

At its core, The Third Room is an interactive musical environment that turns a physical room into an augmented space for musical performance.

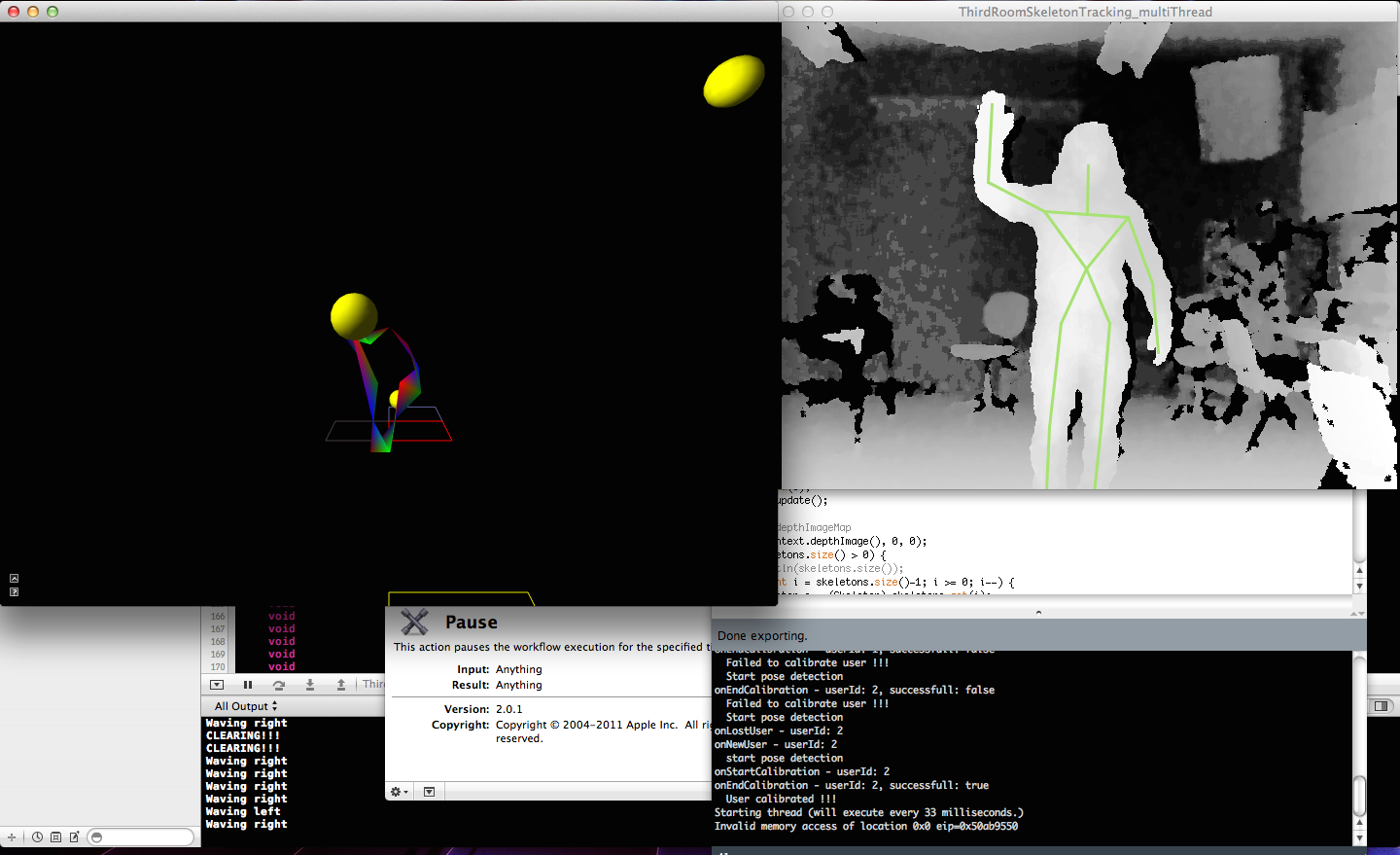

When a user enters The Third Room, the Kinect's depth camera detects their body. The tracked skeleton data follows their movement through the physical room, displays their position in the projected virtual environment, and enables the virtual modes of interaction.
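As an illustration of how a tracked position can be placed in the projected room, here is a minimal C++ sketch. It assumes the tracker reports joint positions in meters relative to the sensor; the `Vec3` and `RoomMapping` types, the tracking ranges, the room size, and the `mirror` flag are all hypothetical. Whether the lateral axis needs flipping depends on the tracker's axis convention.

```cpp
#include <algorithm>
#include <cassert>

// Hypothetical vector type standing in for a real math library's 3D vector.
struct Vec3 {
    float x, y, z;
};

// Hypothetical tracking volume (meters, sensor space) and virtual room size
// (world units). All values are illustrative assumptions.
struct RoomMapping {
    float minX = -2.0f, maxX = 2.0f;  // lateral range the sensor covers
    float minZ = 1.0f,  maxZ = 4.0f;  // usable depth range in front of the sensor
    float roomWidth = 100.0f;         // virtual room width
    float roomDepth = 100.0f;         // virtual room depth
};

// Linearly remaps v from [inLo, inHi] to [outLo, outHi], clamping at the edges.
float remapClamped(float v, float inLo, float inHi, float outLo, float outHi) {
    assert(inHi != inLo);
    float t = (v - inLo) / (inHi - inLo);
    t = std::max(0.0f, std::min(1.0f, t));
    return outLo + t * (outHi - outLo);
}

// Maps a tracked joint into virtual-room coordinates. Height passes through
// unchanged; when mirror is true, the lateral axis is negated before remapping
// (useful when the tracker's x axis runs opposite to the projection's).
Vec3 toVirtualRoom(const Vec3& joint, const RoomMapping& m, bool mirror) {
    float lateral = mirror ? -joint.x : joint.x;
    return {remapClamped(lateral, m.minX, m.maxX, 0.0f, m.roomWidth),
            joint.y,
            remapClamped(joint.z, m.minZ, m.maxZ, 0.0f, m.roomDepth)};
}
```

Clamping keeps an avatar inside the virtual walls even when a user steps past the edge of the assumed tracking range.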

In the first iteration (see video below), the virtual room was pre-populated with different kinds of objects that users could interact with, using their own bodies as the interface. However, users had difficulty finding these objects because of the perceptual mismatch between navigating a physical space and watching a mirrored virtual one. In the final iteration, users create and destroy objects themselves through gestures. Each type of virtual object interacts differently with the user and the environment, and each has its own sonic character, affects the sound of other objects, or both.
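Waving, the gesture that creates a Ball, is one example of this gesture-driven creation. The write-up does not say how gestures were recognized, so the following is a hypothetical C++ sketch of one common approach: count how often the raised hand swings from one side of the elbow to the other, and report a wave when enough swings happen within a short window. The class name, thresholds, and joint inputs are assumptions.

```cpp
#include <cassert>
#include <deque>

// Hypothetical wave detector. A wave is assumed to be the hand swinging from
// one side of the elbow to the other several times within a short window
// while the hand is held above the elbow.
class WaveDetector {
public:
    explicit WaveDetector(int requiredSwings = 4, double windowSeconds = 1.5,
                          float deadZone = 0.05f)
        : requiredSwings_(requiredSwings), windowSeconds_(windowSeconds),
          deadZone_(deadZone) {
        assert(requiredSwings_ > 0 && windowSeconds_ > 0.0 && deadZone_ >= 0.0f);
    }

    // Feeds one skeleton frame (positions in meters, t in seconds) and returns
    // true on the frame where a wave is recognized.
    bool update(float handX, float handY, float elbowX, float elbowY, double t) {
        // Forget swings that are older than the time window.
        while (!swings_.empty() && t - swings_.front() > windowSeconds_) swings_.pop_front();

        // Lowering the hand cancels any wave in progress.
        if (handY <= elbowY) {
            side_ = 0;
            swings_.clear();
            return false;
        }

        // The dead zone around the elbow suppresses jitter near the center.
        float dx = handX - elbowX;
        int side = dx > deadZone_ ? 1 : (dx < -deadZone_ ? -1 : 0);
        if (side == 0) return false;

        if (side_ != 0 && side != side_) swings_.push_back(t);  // crossed sides
        side_ = side;

        if (static_cast<int>(swings_.size()) >= requiredSwings_) {
            swings_.clear();  // require a fresh set of swings before firing again
            return true;
        }
        return false;
    }

private:
    int requiredSwings_;
    double windowSeconds_;
    float deadZone_;
    int side_ = 0;               // -1 or +1: side of the elbow the hand is on; 0 unknown
    std::deque<double> swings_;  // timestamps of recent side changes
};
```

In use, `update` would be called once per skeleton frame with the hand and elbow joints of the same arm; slow side-to-side movement never accumulates enough swings inside the window to register.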

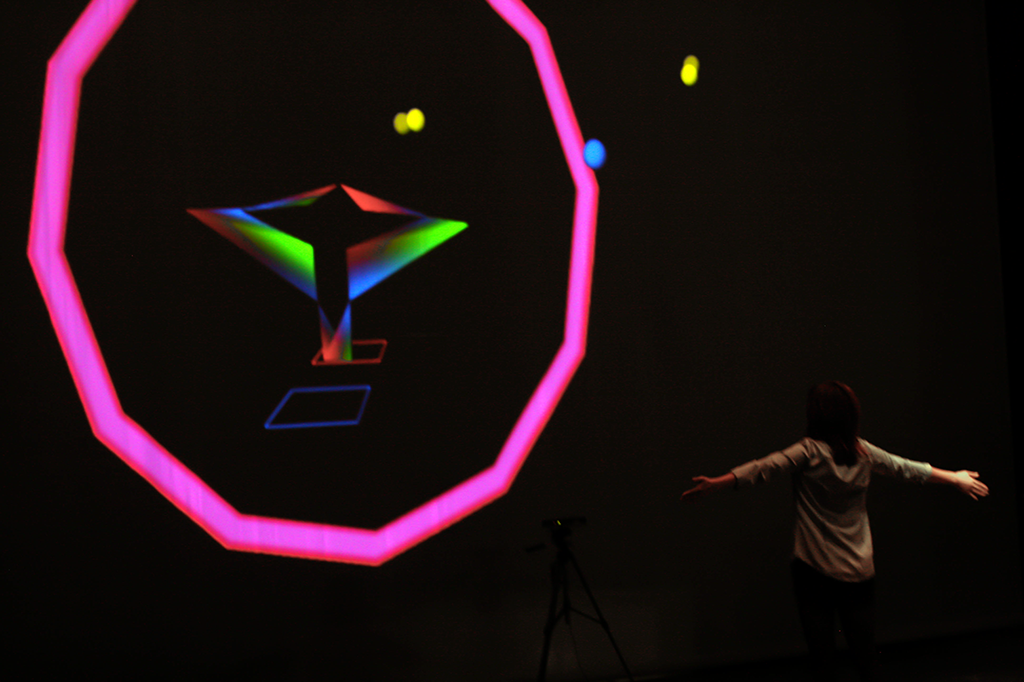

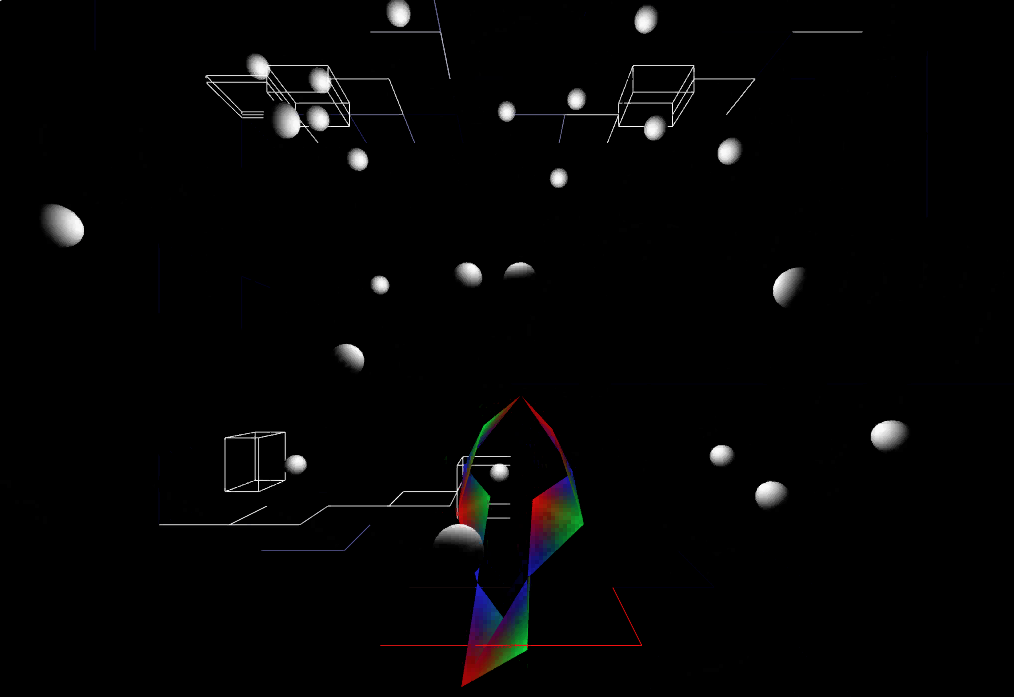

The Third Room - First Iteration

The “Ball” is an object that a user creates by waving. It can be thrown and caught, and it holds a note value that is triggered when it hits the walls of the room. The “Blob” is created when two or more users group together in close proximity: a bass note is triggered as an amorphous shape envelops their avatars, and the parameters of the tone are randomly assigned to the different joints that make up the shape. The “Box” makes up the virtual environment itself and offers several kinds of interaction. The boxes can be turned on and off like buttons, transforming the entire virtual room into a step sequencer that gives users a different level of compositional control. The “Torus” is created when a user brings their hands together; as they pull their hands apart, it grows and encircles their avatar, as seen in some of the pictures below. The Torus controls the decay of tones created by the Ball objects, allowing the user to change the composition dramatically.
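The step-sequencer behavior of the Box grid can be modeled as a grid of on/off cells scanned one column per clock tick. The following C++ sketch assumes that columns are time steps and rows are pitches given as MIDI note numbers; the `BoxSequencer` class, its methods, and that layout are hypothetical, not taken from the project.

```cpp
#include <cassert>
#include <cstddef>
#include <utility>
#include <vector>

// Hypothetical model of the Box grid as a step sequencer: each column is a
// time step, each row a pitch (MIDI note number), each cell one box.
class BoxSequencer {
public:
    BoxSequencer(int steps, std::vector<int> rowNotes)
        : steps_(steps),
          rowNotes_(std::move(rowNotes)),
          active_(static_cast<std::size_t>(steps) * rowNotes_.size(), false) {
        assert(steps_ > 0 && !rowNotes_.empty());
    }

    // Turns a box on or off, as a user does by touching it.
    void toggle(int step, int row) {
        std::size_t i = index(step, row);
        active_[i] = !active_[i];
    }

    bool isOn(int step, int row) const { return active_[index(step, row)]; }

    // Returns the notes of the boxes that are on in the current column, then
    // advances the playhead one step, wrapping around at the end of the grid.
    std::vector<int> tick() {
        std::vector<int> notes;
        for (std::size_t row = 0; row < rowNotes_.size(); ++row) {
            if (active_[index(current_, static_cast<int>(row))]) notes.push_back(rowNotes_[row]);
        }
        current_ = (current_ + 1) % steps_;
        return notes;
    }

private:
    // Row-major index of the box at (step, row).
    std::size_t index(int step, int row) const {
        assert(step >= 0 && step < steps_);
        assert(row >= 0 && static_cast<std::size_t>(row) < rowNotes_.size());
        return static_cast<std::size_t>(row) * static_cast<std::size_t>(steps_) +
               static_cast<std::size_t>(step);
    }

    int steps_;
    std::vector<int> rowNotes_;
    std::vector<bool> active_;
    int current_ = 0;
};
```

A clock (for example, one tick per sixteenth note) would call `tick()` and pass the returned notes to a synthesizer; because the grid state persists between passes, each toggled box keeps sounding on every loop until a user turns it off.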

Users create these objects, and through their interactions they produce a composition that is part generative and part improvised performance. Robotic elements installed above the users strengthen the congruence between the physical and the virtual: as a user reaches into the virtual world, the virtual world reaches back out into the physical one. The physical room also contains a pair of interfaces that make the experience multimodal, interactive, and immersive.

Conceptually, The Third Room is a step toward re-imagining how we approach the creation and performance of digital music. In a virtual environment, the laws that govern the creation of physical musical instruments do not apply. In The Third Room, instruments are created and destroyed; they are manipulable and autonomous; they are real and they are not real. The Third Room attempts to realize a future space for performance or composition that exists both physically and virtually, combining natural interaction in the virtual world with physical touch and feel.

This piece has been exhibited at the CalArts Digital Arts Expo, the Newhall Art Slam, and the 2013 International Conference on New Interfaces for Musical Expression (NIME) in Daejeon, South Korea. It was also published as a paper at the 2013 NIME conference.

COLIN HONIGMAN CREATIVE TECHNOLOGIST LOS ANGELES